23 min read

The Ultimate Guide to Data Architecture

Written by: Micah Horner, Product Marketing Manager, TimeXtender - August 22, 2023

As organizations seek to harness the transformative power of data, the need to build a robust foundation that can withstand the test of time becomes increasingly important. Traditional data architectures, often rigid and inflexible, can quickly become bottlenecks as data volumes surge and technologies advance. In contrast, a future-proof data architecture is designed with adaptability as its guiding principle—capable of accommodating the inevitable shifts in technology, business models, and industry landscapes.

In a landscape where the ability to swiftly pivot, integrate new data sources, and glean meaningful insights can make or break business strategies, future-proof data architecture emerges as an indispensable asset for enterprises striving to remain agile, competitive, and truly data-driven.

By embracing a more agile approach, companies can position themselves to not only address their present data needs but also to seamlessly adapt to the ever-changing technological and market landscape, ensuring their long-term success.

What is Data Architecture?

At its core, data architecture is the blueprint for a company's data infrastructure. It's the systematic arrangement of various components, tools, and processes that enable the ingestion, preparation, and delivery of business-ready data in a structured and efficient manner.

Data architecture involves designing how data flows through an organization, from its sources like databases, APIs, and external systems, all the way to its destinations, such as data warehouses, data lakes, and reporting tools. This design not only ensures data availability but also focuses on its quality, security, and accessibility.

Assess Your Business Requirements

It's essential to emphasize that the journey towards designing a future-proof data architecture begins with a deep understanding of your organization's unique business requirements. Every company has distinct goals, challenges, and data-related needs that must be taken into account.

Your business’s unique needs are the compass that guides the entire process of designing your data architecture, ensuring that your data infrastructure aligns with the goals and objectives of your organization.

Here's a comprehensive look at how to conduct this crucial assessment:

-

Define Objectives: Start by clearly defining the overarching objectives of your organization. What are you aiming to achieve with data-driven insights? Whether it's improving customer satisfaction, optimizing operations, or exploring new markets, having well-defined objectives sets the direction for your data architecture.

-

Identify Stakeholders: Recognize the key stakeholders who will interact with the data architecture. This includes business analysts, decision-makers, data scientists, and more. Understanding their specific needs and responsibilities helps tailor the architecture to suit their requirements.

-

Understand Data Requirements: Dive deep into the types of data required for achieving your objectives. What data sources are essential? What granularity is needed? Are there specific timeframes or historical data that must be considered? This understanding forms the basis for data collection and storage decisions.

-

Quantify Data Volume and Velocity: Estimate the volume of data your organization generates and expects to handle. Additionally, consider the speed at which data is produced and needs to be processed. This helps determine the scalability and processing capabilities required.

-

Analytical Goals: Clearly define the analytical goals you want to achieve. Do you need to perform complex ad-hoc queries, trend analysis, predictive modeling, or real-time reporting? Knowing the analytical requirements influences the design of data models and processing capabilities.

-

Data Quality and Accuracy: Assess the level of data quality required for your objectives. Depending on your industry and goals, data accuracy might be critical. Identify potential sources of data errors and inconsistencies, and plan mechanisms for data cleansing and validation.

-

Compliance and Regulations: Consider any industry-specific regulations or data privacy requirements that apply to your organization. Ensure that your data architecture complies with these regulations to avoid legal and reputational risks.

-

Future Growth: Anticipate how your organization's data needs might evolve over time. As your business expands, the data architecture should accommodate new data sources, increased data volumes, and emerging technologies without requiring extensive redesign.

-

Integration with Existing Systems: Evaluate the systems and tools your organization already uses. Your data architecture should seamlessly integrate with these systems to enable a smooth flow of data and insights.

-

Resource Constraints: Recognize any limitations in terms of budget, manpower, and time. Design a data architecture that strikes a balance between your organization's aspirations and its practical capabilities.

-

Prioritize Use Cases: Not all use cases are of equal importance. Prioritize the analytical scenarios that have the most significant impact on your business goals. This helps allocate resources effectively and ensures that the architecture addresses the most critical needs first.

-

Iterative Feedback: Throughout the assessment process, seek feedback from stakeholders. This iterative approach ensures that the architecture is molded to meet evolving needs and expectations.

By conducting this assessment with meticulous attention to detail, you establish a strong foundation for building a data architecture that empowers your organization with actionable insights and strategic decision-making capabilities.

The 3 Foundational Components of a Modern Data Architecture

There are three foundational layers that form the bedrock of a robust and efficient data infrastructure: ingestion, preparation, and delivery. These layers work together seamlessly to transform raw data into valuable insights, enabling businesses to make informed decisions and stay ahead in today's data-driven landscape.

The division of data architecture into three distinct components is not arbitrary but rather a strategic approach to address the complex and evolving needs of modern businesses. This strategic segmentation addresses specific challenges and maximizes efficiency at each stage of the data lifecycle.

Let's dive into each layer and understand its significance:

1. Ingestion Layer: In the initial layer of data architecture, known as the ingestion layer or data lake, raw data from various sources is collected and stored in its native format. This could include data from databases, applications, external APIs, and more. The data lake acts as a centralized repository where data can be ingested at scale without immediate structuring or transformation. This raw data can be both structured (like tables) and unstructured (like text documents or images).

The primary advantage of the data lake is its flexibility in accommodating diverse data types and formats. It allows organizations to capture large volumes of data without worrying about its exact structure or usage at this stage. However, it's important to note that without proper organization and governance, data lakes can turn into data swamps, making it difficult to retrieve valuable insights.

2. Preparation Layer: Moving on to the preparation layer, also known as the data warehouse, data from the ingestion layer is transformed, cleaned, and structured to make it ready for analysis and reporting. In this stage, data is refined and consolidated to ensure its quality, consistency, and accuracy. This involves tasks like removing duplicates, handling missing values, and aggregating data to a meaningful level.

The data warehouse creates a structured environment where data can be efficiently queried and analyzed. It serves as a foundation for generating insights by providing a well-organized and optimized structure that supports complex querying and reporting operations. Data in the warehouse is typically organized into tables, fact tables containing measures, and dimension tables containing descriptive attributes.

3. Delivery Layer: The delivery layer, often implemented as data marts, is the final stage of the data architecture. It involves creating smaller, specialized subsets of the data warehouse that are tailored to specific business needs or user groups. These data marts focus on providing easily accessible and relevant information to specific departments or roles within the organization.

Data marts are designed to improve query performance for specific types of analysis by pre-aggregating and structuring the data in ways that align with the needs of a particular business function. This optimization makes querying and reporting faster and more efficient for end-users, as they can access the necessary insights without dealing with the complexity of the entire data warehouse.

Selecting Your Data Storage Technology

Choosing the right storage technology is a critical decision that forms the bedrock of your data infrastructure. The storage solution you opt for will influence how effectively your data is managed, accessed, and analyzed. In today's data-driven landscape, where data volumes continue to soar, making an informed choice about storage technology is pivotal to achieving optimal performance, scalability, and cost-efficiency.

When it comes to storage technology, several options are available, each catering to different needs and requirements. Here, we'll examine three prominent cloud storage vendors—Microsoft, Snowflake, and AWS—while highlighting the kinds of storage technology they offer:

Microsoft Azure

- Azure Blob Storage is well-suited for storing unstructured data, such as images, videos, and backups.

- Azure SQL Database offers a fully managed relational database service for structured data storage and querying.

- Azure Data Lake Storage is designed for big data analytics and supports both structured and unstructured data.

Snowflake

Snowflake stands out as a cloud-based data warehousing platform that offers a unique approach to data storage. It separates storage from compute, enabling you to scale each independently.

Snowflake's architecture is designed for data sharing, scalability, and performance. It provides a seamless experience for ingesting, transforming, and querying data, making it an attractive option for organizations looking for a cloud-native, highly scalable data warehousing solution.

Amazon Web Services (AWS)

- Amazon S3 (Simple Storage Service) is one of the most popular cloud object storage solutions, ideal for scalable and cost-effective storage of large volumes of data, backups, and archives.

- Amazon Redshift serves as a fully managed data warehousing service for analytics, providing fast query performance and scalability.

- For semi-structured and structured data, Amazon RDS (Relational Database Service) offers managed database instances.

On-Premises and Hybrid Approaches

In the realm of data architecture, the discussion extends beyond cloud-based solutions to encompass on-premises and hybrid approaches. These alternative strategies offer flexibility and customization that cater to unique organizational needs. Let's delve into on-premises and hybrid approaches, examining their benefits and considerations:

On-Premises Data Storage:

This approach offers distinct advantages:

-

Data Control: With on-premises solutions, you have direct control over your data. This can be crucial for industries with stringent compliance requirements or organizations that prioritize data sovereignty.

-

Customization: On-premises architectures allow for extensive customization to align with specific data processing, security, and integration needs.

-

Predictable Costs: You have better control over cost management, as on-premises solutions typically involve upfront capital expenditures instead of recurring subscription fees.

However, on-premises architectures also present challenges:

-

Initial Costs: Setting up on-premises infrastructure can be capital-intensive, requiring investments in hardware, software licenses, and skilled personnel.

-

Scalability: Scaling on-premises solutions can be complex and time-consuming. Organizations need to accurately anticipate future growth to avoid capacity bottlenecks.

-

Maintenance and Updates: Ongoing maintenance, updates, and security patches are your responsibility, necessitating a dedicated IT team.

Hybrid Data Architecture:

Hybrid data architecture combines on-premises and cloud components to leverage the strengths of both approaches. This approach offers a middle ground:

-

Flexibility: Hybrid architectures allow you to keep sensitive data on-premises while utilizing cloud resources for data processing, analytics, or less sensitive workloads.

-

Scalability: Cloud resources can be easily scaled up or down to accommodate changing data demands, preventing the need to over-provision on-premises hardware.

-

Disaster Recovery: Cloud resources provide robust disaster recovery options, enhancing data resilience.

Yet, hybrid architectures have considerations:

-

Integration Complexity: Managing data flows between on-premises and cloud components requires careful integration planning to ensure seamless operations.

-

Data Security: Hybrid setups require robust security measures to protect data moving between on-premises and cloud environments.

-

Costs and Management: A hybrid architecture introduces a mix of cost structures, including both capital expenditures and operational expenses for cloud services.

Selecting between on-premises, cloud, or hybrid approaches depends on your organization's unique circumstances. While cloud solutions offer scalability and managed services, on-premises architectures provide data control and customization. Hybrid architectures strike a balance, offering flexibility while addressing data privacy and compliance concerns.

Consider factors such as your organization's industry, data sensitivity, budget constraints, and existing IT infrastructure when making this critical decision. Regardless of the approach chosen, a well-designed data architecture remains pivotal in driving data-driven insights and business success.

Tool Selection: Fragmented or All-in-One

Now that you’ve assessed your business’ requirements and selected your storage technology, it's time to select the right tools for building and managing your data infrastructure.

There are two primary approaches: fragmented or all-in-one. Each approach comes with its own advantages and challenges, and your choice should align with your organization's specific needs and resources:

The Fragmented Approach

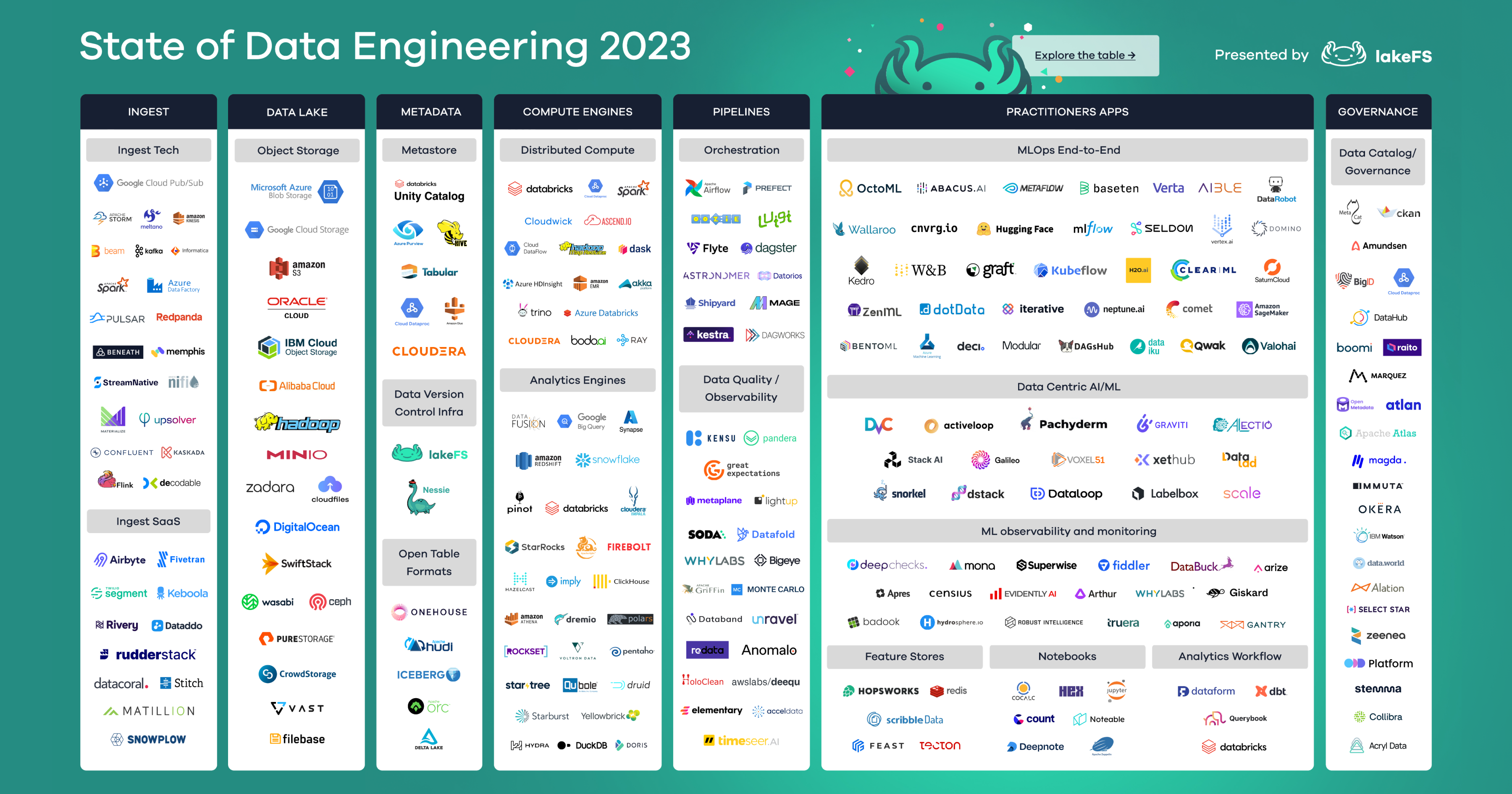

The data architecture landscape has undergone a profound shift, transitioning from traditional approaches and legacy tools to what is now known as the "modern data stack." This evolution was driven by the need for enhanced capabilities and more efficient data processing.

In its essence, the modern data stack approach revolves around assembling a fragmented collection of “best-of-breed” tools and systems to construct a comprehensive data infrastructure.

While the modern data stack holds promise, it has also faced criticism for failing to address key data challenges and creating new complexities. These challenges include:

-

Tool Sprawl: The proliferation of tools within the modern data stack can overwhelm organizations, making it challenging to select the right combination of tools for their specific needs. This leads to data silos, inefficiencies, and poor integration, hampering effective collaboration.

-

Procurement and Billing Challenges: With numerous tools to choose from, navigating procurement, negotiating contracts, managing licenses, and dealing with different billing cycles can be time-consuming and costly.

-

High Cost of Ownership: Implementing and maintaining a complex stack of tools demands specialized expertise and incurs significant expenses related to licensing, infrastructure, training, and support.

-

Lengthy Setup and Maintenance: The touted "plug-and-play" modularity often remains elusive, as the process of setting up, integrating, and maintaining multiple tools remains resource-intensive and time-consuming.

-

Manual Coding: Despite promises of low-code interfaces, manual coding remains a reality for custom data transformations, integrations, and machine learning tasks, adding complexity to an already fragmented stack.

-

Disjointed User Experience: The diverse nature of tools within the stack results in varied interfaces and workflows, making it challenging for users to navigate and learn the entire ecosystem.

-

Knowledge Silos: Teams or individuals often become experts in specific tools, leading to a lack of cross-functional understanding and collaboration, hampering holistic solutions.

-

Staffing Challenges: Many tools require specialized skill sets, making hiring or training staff with these skills a competitive challenge, further escalating costs.

-

Inconsistent Data Modeling: Different tools and systems lead to a lack of consistent data definitions, schema, and semantics, undermining data reliability and analysis.

-

Limited Deployment Flexibility: The stack's emphasis on cloud-native tools may conflict with on-premises or hybrid preferences, limiting deployment options.

-

Vendor Lock-In: Dependence on specific vendors can lead to limited flexibility, innovation, and challenges if migrating data out of a system becomes necessary.

This fragmented nature of this approach has led to the emergence of what is termed "modern data stacks" — tangled, convoluted collections of tools and systems that often hinder rather than empower data initiatives.

These fragmented tool stacks, while seemingly promising, contribute to the accumulation of "Data Debt," where quick fixes create long-term complexities and missed opportunities for growth and innovation.

The All-in-One Approach

In the face of the modern data stack nightmare, the allure of "All-in-One" data management software is undeniable. The companies that develop these tools promise a unified solution, a single platform that can handle all your data needs. No more juggling multiple tools, no more dealing with incompatible systems, and no more wrestling with a monstrous mess of code. Sounds like a dream, right?

However, a closer examination reveals that these platforms often fall short of their promises, leading to a new breed of complexities, limitations, and hidden costs:

-

The Illusion of Unity: While these "All-in-One" platforms market themselves as unified solutions, their reality often falls short. Under a single brand, they bundle disparate tools, each with its own interface and feature set. As a result, users are left dealing with the same fragmented experience they were trying to replace.

-

Acquisition Games: The creation of such platforms frequently involves acquiring various tools, leading to a mismatch of code that's hastily integrated. Despite being presented as a unified platform, these acquired components often lack true compatibility, resulting in a tangled mess of functionality that fails to deliver on the cohesion they promise.

-

Jack-of-All-Trades: Platforms aiming to serve a broad user base often spread their resources thin, compromising the depth and quality of their features. Rather than excelling in specific functionalities, these platforms often end up as a jack of all trades, but a master of none.

-

The Absence of Agility: In a landscape where agility is paramount, these platforms can struggle to ingest, transform, and deliver data swiftly. Their attempt to handle multiple tasks often dilutes their performance, making them slower and less adept at addressing the fast-changing demands of businesses.

-

Hidden Costs: Despite appearing cost-effective at first glance, the true financial impact of these platforms lurks beneath the surface. The initial investment is merely the tip of the iceberg, with additional costs such as ongoing maintenance, upgrades, and unanticipated features contributing to a much larger expenditure than anticipated.

-

Implementation Hurdles: The adoption of such platforms requires considerable investment in training and troubleshooting, due to the complexity associated with multiple integrated tools. Managing what is essentially a concealed data stack demands significant time and resources, adding to the overall expenses and detracting from the perceived cost-effectiveness.

-

Proprietary Prison: In this ecosystem, you're not just using a tool, you're buying into an entire system. This system dictates how you store, manage, and control your data. These tools are rarely data or platform agnostic, meaning they favor certain types of data and storage platforms (Azure, AWS, Snowflake, etc.), and often lack robust support for others. This can create a bottleneck in your data management, hindering your ability to adapt to changing business needs and to integrate with diverse data sources and storage platforms. Most of these tools proudly claim to be “cloud-native”, which is a fancy term for forcing you to migrate all your data to the cloud, leaving you high and dry if you prefer on-premises or hybrid approaches. This can be a significant drawback for organizations with unique needs or complex data infrastructures.

-

Low-Code Lies: While often marketed as user-friendly with "low-code" features, in reality, these platforms usually only offer a handful of functionalities with simplified interfaces. The majority of the platform remains as complex and code-intensive as legacy tools, undermining the promise of ease and accessibility.

-

High Cost of Freedom: The most ominous aspect of embracing an "All-in-One" platform is the steep price of liberation. These platforms don't just limit your data management options; they hold you captive. Should you ever decide to migrate to an alternative solution, be prepared to reconstruct your entire infrastructure from the ground up at significant financial cost.

While the appeal of consolidating tools under one roof is undeniable, the reality often devolves into a fragmented experience with hidden costs, compromised functionalities, and a lack of agility.

Just as the term "modern data stack" refers to the fragmented approach of cobbling together diverse tools, “unified platforms” is the term we use for these all-in-one platforms that have taken on the appearance of unified solutions while essentially being modern data stacks in disguise.

Additional Considerations

As organizations design their data architecture and navigate the complex landscape of tools, it's important to recognize that both fragmented and all-in-one approaches often fail to adequately address several critical aspects of effective data management.

These considerations play a pivotal role in ensuring the integrity, security, and accessibility of data, yet they are frequently overlooked or under-addressed within these approaches:

Governance

Both fragmented and all-in-one approaches often struggle to provide robust governance mechanisms. Effective data governance requires well-defined policies, procedures, and oversight to ensure data accuracy, compliance, and accountability.

In the fragmented approach, disparate tools and systems lack a unified governance framework, leading to inconsistent data practices, quality issues, and compliance risks.

On the other hand, all-in-one platforms might offer superficial governance features but can fall short in supporting nuanced governance needs, such as data lineage tracking and access controls, resulting in inadequate oversight and potential data misuse.

Security

Inadequate security measures are a common pitfall in both fragmented and all-in-one approaches.

In the fragmented model, diverse tools often lack standardized security protocols, leading to vulnerabilities that malicious actors can exploit.

While all-in-one platforms may tout security features, their complexity can lead to oversight or misconfigurations, leaving critical data exposed. Moreover, their "one-size-fits-all" approach may not align with specific security requirements of an organization, creating potential gaps in protection.

Orchestration

Orchestrating data flows efficiently and reliably is crucial for data pipelines.

The fragmented approach struggles with cohesive orchestration, as each tool may have its own workflow, leading to delays, errors, and inconsistent data movement.

All-in-one platforms might offer internal orchestration capabilities, but they can become cumbersome as complexity grows, leading to suboptimal performance and a lack of adaptability.

Observability

In both approaches, maintaining proper observability throughout the data pipeline is often challenging.

In the fragmented model, tracing data across multiple tools and systems can be complex, resulting in blind spots and difficulties in diagnosing issues.

All-in-one platforms may offer basic monitoring capabilities, but they may lack the granularity needed to identify and address problems effectively. The inability to gain comprehensive insights into the entire data pipeline undermines timely troubleshooting and optimization.

Metadata Management

Metadata—the information about data—is pivotal for understanding, managing, and utilizing data effectively.

In the fragmented approach, metadata is often scattered across tools, making it hard to maintain a cohesive understanding of the data landscape.

All-in-one platforms might provide centralized metadata management, but the scope of their coverage can be limited, particularly when integrating external data sources or handling diverse data types. This hampers the ability to derive meaningful insights from data and compromises decision-making.

A Third Option: The Holistic Approach

Given these challenges, organizations of all sizes are now seeking a new solution that can unify the data stack and provide a more holistic approach to data integration that’s optimized for agility.

Such a solution would be...

-

Agile: It should be designed to be fast and flexible. It should be able to adapt to changing business needs and technology landscapes, ensuring that your organization always has a robust infrastructure for analytics and reporting.

-

Unified: It should provide a single, unified solution for data integration. It should not be a patchwork of different tools, but a holistic, integrated approach that’s optimized for agility.

-

Flexible: It should be designed to be technology-agnostic, ensuring that your organization can adapt and grow without being held back by outdated technology or restrictive vendor lock-in.

-

Low-Code: It should offer a single, low-code user interface that avoids the complexity and fragmentation associated with the modern data stack approach. It should be designed to make data integration simple, efficient, and automated.

-

Cost-Effective: It should optimize performance and efficiency, reducing the need for large, specialized teams, ensuring substantial cost savings, and allowing your organization to allocate resources where they truly matter.

By breaking down the barriers of the existing approaches and eliminating the exclusivity that plagues the industry, this new solution could finally unlock the full potential of data for everyone.

We realized we couldn't just sit around and hope for someone else to create such a solution.

So, we decided to create it ourselves.

Meet TimeXtender, the Holistic Solution for Data Integration

TimeXtender provides all the features you need to build a future-proof data infrastructure capable of ingesting, transforming, modeling, and delivering clean, reliable data in the fastest, most efficient way possible - all within a single, low-code user interface.

You can't optimize for everything all at once. That's why we take a holistic approach to data integration that optimizes for agility, not fragmentation. By using metadata to unify each layer of the data stack and automate manual processes, TimeXtender empowers you to build data solutions 10x faster, while reducing your costs by 70%-80%.

TimeXtender is not the right tool for those who want to spend their time writing code and maintaining a complex stack of disconnected tools and systems.

However, TimeXtender is the right tool for those who simply want to get shit done.

From MODERN DATA stack to Holistic Solution

Say goodbye to a pieced-together "stack" of disconnected tools and systems.

Say hello to a holistic solution for data integration that's optimized for agility.

Data teams at top-performing organizations such as Komatsu, Colliers, and the Puerto Rican Government are already taking this new approach to data integration using TimeXtender.

How TimeXtender Helps You Build a Future-Proof Data Infrastructure

Holistic Data Integration

Our holistic approach to data integration is accomplished with 3 primary components:

1. Ingest Your Data

The Ingest component (Operational Data Exchange) is where TimeXtender consolidates raw data from disconnected sources into one, centralized data lake. This raw data is often used in data science use cases, such as training machine learning models for advanced analytics.

-

Easily Consolidate Data from Disconnected Sources: The Operational Data Exchange (ODX) allows organizations to ingest and combine raw data from potentially hundreds of sources into one, centralized data lake with minimal effort.

-

Universal Connectivity: TimeXtender provides a directory of over 250 pre-built, fully-managed data connectors, with additional support for any custom data source.

-

Automate Ingestion Tasks: The ODX Service allows you to define the scope (which tables) and frequency (the schedule) of data transfers for each of your data sources. By learning from past executions, the ODX Service can then automatically set up and maintain object dependencies, optimize data loading, and orchestrate tasks.

-

Accelerate Data Transfers with Incremental Load: TimeXtender provides the option to load only the data that is newly created or modified, instead of the entire dataset. Because less data is being loaded, you can significantly reduce processing times and accelerate ingestion, validation, and transformation tasks.

-

No More Broken Pipelines: TimeXtender provides a more intelligent and automated approach to data pipeline management. Whenever a change in your data sources or systems is made, TimeXtender allows you to instantly propagate those changes across the entire pipeline with just a few clicks - no more manually debugging and fixing broken pipelines.

2. Prepare Your Data

The Prepare component (Modern Data Warehouse) is where you cleanse, validate, enrich, transform, and model the data into a "single version of truth" inside your data warehouse.

-

Turn Raw Data Into a Single Version of Truth: The Modern Data Warehouse (MDW) allows you to select raw data from the ODX, cleanse, validate, and enrich that data, and then define and execute transformations. Once this data preparation process is complete, you can then map your clean, reliable data into dimensional models to create a "single version of truth" for your organization.

-

Powerful Data Transformations with Minimal Coding: Whether you're simply changing a number from positive to negative, or performing complex calculations using many fields in a table, TimeXtender makes the data transformation process simple and easy. All transformations can be performed inside our low-code user interface, which eliminates the need to write complex code, minimizes the risk of errors, and drastically speeds up the transformation process. These transformations can be made even more powerful when combined with Conditions, Data Selection Rules, and custom code, if needed.

-

A Modern Approach to Data Modeling: Our Modern Data Warehouse (MDW) model empowers you to build a highly structured and organized repository of reliable data to support business intelligence and analytics use cases. Our MDW model starts with the traditional "star schema", but adds additional tables and fields that provide valuable insights to data consumers. Because of this, our MDW model is easier to understand and use, answers more questions, and is more capable of adapting to change.

3. Deliver Your Data

The Deliver component (Shared Semantic Layer) is where you create data marts that deliver only the relevant subset of data that each business unit needs (sales, marketing, finance, etc.), rather than overwhelming them with all reportable data in the data warehouse.

-

Maximize Data Usability with a Shared Semantic Layer: Our MDW model can be used to translate technical data jargon into familiar business terms like “product” or “revenue” to create a shared “semantic layer” (SSL). This SSL provides your entire organization with a simplified, consistent, and business-friendly view of all the data available, which maximizes data discovery and usability, ensures data quality, and aligns technical and non-technical teams around a common data language.

-

Increase Agility with Data Marts: The SSL allows you to create department or purpose-specific models of your data, often referred to as “data marts”. These data marts provide only the most relevant data to each department or business unit, which means they no longer have to waste time sorting through all the reportable data in the data warehouse to find what they need.

-

Deploy to Your Choice of Visualization Tools: Semantic models can be deployed to your choice of visualization tools (such as PowerBI, Tableau, or Qlik) for fast creation and flexible modification of dashboards and reports. Because semantic models are created inside TimeXtender, they will always provide consistent fields and figures, regardless of which visualization tool you use. This approach drastically improves data governance, quality, and consistency, ensuring all users are consuming a single version of truth.

Modular Components, Integrated Functionality

TimeXtender's modular approach and cloud-based instances give you the freedom to build each of these three components separately (a single data lake, for example), or all together as a complete and integrated data solution.

Unify Your Data Stack With the Power of Metadata

The fragmented approach of the "modern data stack" drives up costs by requiring additional, complex tools for basic functionality, such as transformation, modeling, governance, observability, orchestration, etc.

We take a holistic approach that provides all the data integration capabilities you need in a single solution, powered by metadata.

Our metadata-driven approach enables automation, efficiency, and agility, empowering organizations to build data solutions 10x faster and drive business value more effectively.

Unified Metadata Framework

TimeXtender's "Unified Metadata Framework" is the unifying force behind our holistic approach to data integration. It stores and maintains metadata for each data asset and object within our solution, serving as a foundational layer for various data integration, management, orchestration, and governance tasks.

This Unified Metadata Framework enables:

-

Automatic Code Generation: Metadata-driven automation reduces manual coding, accelerates development, and minimizes errors.

-

Documentation: The framework captures metadata at every stage of the data lifecycle to enable automatic and detailed documentation of the entire data environment.

-

Data Catalog: The framework provides a comprehensive data catalog that makes it easy for users to discover and access data assets.

-

Data Lineage: It tracks the lineage of data assets, helping users understand the origin, transformation, and dependencies of their data.

-

Semantic Layer: The framework establishes a unified, understandable data model, which we call the “Shared Semantic Layer”. This semantic layer not only simplifies data access but also ensures that everyone in the organization is working with a common understanding and interpretation of the data.

-

Data Quality Monitoring: It assists in monitoring data quality, ensuring that the data being used is accurate, complete, and reliable.

-

Data Governance: It supports holistic data governance, ensuring that data is collected, analyzed, stored, shared, and used in a consistent, secure, and compliant manner.

-

End-to-End Orchestration: It helps orchestrate workflows and ensures smooth, consistent, and efficient execution of data integration tasks.

-

Automated Performance Optimization: It leverages metadata to automatically optimize performance, ensuring that data processing and integration tasks run efficiently and at scale.

-

Future-Proof Agility: It simplifies the transition from outdated database technology by separating business logic from the storage layer.

-

And much more!

Metadata: A Solid Foundation for the Future

Our Unified Metadata Framework ensures that your data infrastructure can easily adapt to future technological advancements and business needs.

By unifying the data stack, simplifying the transition from legacy technologies, and orchestrating workflows, our Unified Metadata Framework provides a strong foundation for emerging technologies, such as artificial intelligence, machine learning, and advanced analytics.

As these technologies continue to evolve, our holistic, metadata-driven solution ensures that your organization remains at the forefront of innovation, ready to leverage new opportunities and capabilities as they arise.

Note: All of TimeXtender’s powerful features and capabilities are made possible using metadata only. Your actual data never touches our servers, and we never have any access or control over it. Because of this, our unique, metadata-driven approach eliminates the security risks, compliance issues, and governance concerns associated with other tools and approaches.

Data Observability

TimeXtender gives you full visibility into your data assets to ensure access, reliability, and usability throughout the data lifecycle.

-

Data Catalog: TimeXtender provides a robust data catalog that enables you to easily discover, understand, and access data assets within your organization.

-

Data Lineage: Because TimeXtender stores your business logic as metadata, you can choose any data asset and review the lineage all the way back to the source database to ensure users their data is complete, accurate, and reliable.

-

Impact Analysis: Easily understand the effects of changes made to data sources, data fields, or transformations on downstream processes and reports.

-

Automatic Project Documentation: By using your project's metadata, TimeXtender can automatically generate full, end-to-end documentation of your entire data environment. This includes names, settings, and descriptions for every object (databases, tables, fields, security roles, etc.) in the project, as well as code, where applicable.

Data Quality

TimeXtender allows you to monitor, detect, and quickly resolve any data quality issues that might arise.

-

Data Profiling: Automatically analyze your data to identify potential quality issues, such as duplicates, missing values, outliers, and inconsistencies.

-

Data Cleansing: Automatically correct or remove data quality issues identified in the data profiling process to ensure consistency and accuracy.

-

Data Enrichment: Easily incorporate information from external sources to augment your existing data, helping you make better decisions with more comprehensive information.

-

Data Validation: Define and enforce data selection, validation, and transformation rules to ensure that data meets the required quality standards.

-

Monitoring & Alerts: Set up automated alerts to notify you when data quality rules are violated, data quality issues are detected, or executions fail to complete.

Low-Code Simplicity

TimeXtender allows you to build data solutions 10x faster with an intuitive, coding-optional user interface.

-

One, Unified, Low-Code UI: Our goal is to empower everyone to get value from their data in the fastest, most efficient way possible — because time matters. To achieve this goal, we’ve not only unified the data stack, we’ve also eliminated the need for manual coding by providing all the powerful data integration capabilities you need within a single, low-code user interface with drag-and-drop functionality.

-

Automated Code Generation: TimeXtender automatically generates T-SQL code for data cleansing, validation, and transformation, which eliminates the need to manually write, review, and debug countless lines of SQL code.

-

Low Code, High Quality: Low-code tools like TimeXtender reduce coding errors by relying on visual interfaces rather than requiring developers to write complex code from scratch. TimeXtender provides pre-built templates, components, and logic that help ensure that code is written in a consistent, standardized, and repeatable way, reducing the likelihood of coding errors.

-

No Need for a Code Repository: With these powerful code generation capabilities, TimeXtender eliminates the need to manually write and manage code in a central repository, such as Github.

-

Build Data Solutions 10x Faster: By automating manual, repetitive data integration tasks, TimeXtender empowers you to build data solutions 10x faster, experience 70% lower build costs and 80% lower maintenance costs, and free your time to focus on higher-impact projects.

DataOps

TimeXtender helps you optimize the process of developing, testing, and orchestrating data projects.

-

Developer Tools: You can extend TimeXtender’s capabilities with powerful developer tools, such as user-defined functions, stored procedures, reusable snippets, PowerShell script executions, and custom code, if needed.

-

Multiple Environments for Development and Testing: TimeXtender allows you to create separate development and testing environments to identify and fix any bugs before putting them into full production.

-

Version Control: Every time you save a project, a new version is created, meaning you can always roll back to an earlier version, if needed.

-

End-to-End Orchestration: Our Intelligent Execution Engine leverages metadata to automate and optimize every aspect of your data integration workflows. As a result, TimeXtender is able to provide seamless end-to-end orchestration and deliver unparalleled performance while significantly reducing costs.

-

Performance Optimization: Enhance your project’s performance with a variety of tools and features designed to streamline your project, optimize database size and organization, and improve overall efficiency.

Future-Proof Agility

TimeXtender helps you quickly adapt to changing circumstances and emerging technologies.

-

10x Faster Implementation: TimeXtender seamlessly overlays and accelerates your data infrastructure, which means you can build a complete data solution in days, not months — no more costly delays or disruptions.

-

Technology-Agnostic: Because TimeXtender is independent from data sources, storage services, and visualization tools, you can be assured that your data infrastructure can easily adapt to new technologies and scale to meet your future analytics demands.

-

Effortless Deployment: By separating business logic from the storage layer, TimeXtender simplifies the process of deploying projects to the storage technology of your choice. You can create your data integration logic using a drag-and-drop interface, and then effortlessly deploy it to your target storage location with a single click!

-

Flexible Deployment Options: TimeXtender supports deployment to on-premises, hybrid, or cloud-based data storage technologies, which future-proofs your data infrastructure as your business evolves over time. If you decide to change your storage technology in the future for any reason (such as migrating your on-premises data to the cloud, or vice versa), you can simply point TimeXtender at the new target location, and it will automatically generate and install all the necessary code to build and populate your new deployment with a single click, and without any extra development needed.

-

Eliminate Vendor Lock-In: Because your transformation logic is stored as portable SQL code, you can bring your fully-functional data warehouse with you if you ever decide to switch to a different solution in the future — no need to rebuild from scratch.

Security and Governance

TimeXtender allows you to easily implement policies, procedures, and controls to ensure the confidentiality, integrity, and availability of your data.

-

Holistic Data Governance: TimeXtender enables you to implement data governance policies and procedures to ensure that your data is accurate, complete, and secure. You can define data standards, automate data quality checks, control system access, and enforce data policies to maintain data integrity across your organization.

-

Access Control: TimeXtender’s allows you to provide users with access to sensitive data, while maintaining data security and quality. You can easily create database roles, and then restrict access to specific views, schemas, tables, and columns (object-level permissions), or specific data in a table (data-level permissions). Our security design approach allows you to create one security model and reuse it on any number of tables.

-

Easy Administration: TimeXtender's online portal provides a secure, easy-to-use interface for administrators to manage user access and data connections. Administrators can easily set up and configure user accounts, assign roles and permissions, and control access to data sources from any mobile device, without having to download and install the full desktop application.

-

Compliance with Regulations & Internal Standards: TimeXtender also offers built-in support for compliance with various industry and regulatory standards, such as GDPR, HIPAA, and Sarbanes-Oxley, as well as the ability to enforce your own internal standards. This ensures that your data remains secure and compliant at all times.

A Proven Leader in Data Integration

TimeXtender offers a proven solution for organizations looking to build data solutions 10x faster, while maintaining the highest standards of quality, security, and governance.

-

Experienced: Since 2006, we have been optimizing best practices and helping top-performing organizations, such as Komatsu, Colliers, and the Puerto Rican Government, build data solutions 10x faster than standard methods.

-

Trusted: We have an unprecedented 97% retention rate with over 3,300 customers due to our commitment to simplicity, automation, and execution through our Xpeople, our powerful technology, and our strong community of over 170 partners in 95 countries.

-

Proven: Our holistic approach has been proven to reduce build costs by up to 70% and maintenance costs by up to 80%. See how much you can save with our calculator here: timextender.com/calculator

-

Responsive: When you choose TimeXtender, you can choose to have one of our hand-selected partners help you get set up quickly and develop a data strategy that maximizes results, with ongoing support from our Customer Success and Solution Specialist Teams.

-

Committed: We provide an online academy, comprehensive certifications, and weekly blog articles to help your whole team stay ahead of the curve as you grow.