12 min read

Traditional Data Pipelines vs Modern Data Flows

Written by: Micah Horner, Product Marketing Manager, TimeXtender - December 8, 2023

Traditional data pipelines, once the backbone of data processing and analytics, have been challenged by the evolving needs of businesses. Modern business environments require greater flexibility, scalability, and integration than traditional systems can offer.

As a result, modern data flows have emerged, designed to overcome the rigidity and high maintenance demands of their predecessors. They are defined by their flexibility, allowing rapid integration of new data sources and swift adaptation to business changes. Utilizing advanced technologies like AI and cloud services, modern data flows enhance automation and orchestration, significantly reducing manual effort and error potential.

This new approach not only optimizes data processing but also makes data more accessible across varied skill levels, democratizing data usage.

Modern data flows are more than just a technological advancement; they represent a fundamental change in how data is managed, aligning with the rapid pace and diverse requirements of the digital transformation era.

The Era of Traditional Data Pipelines

In the early stages of data management, the primary goal of data pipelines was to serve specific, often immediate business needs, like generating one-off reports or addressing unique analytical queries. This specificity meant that each pipeline was tailored to a particular set of requirements, involving unique data sources, formats, and processing logic.

Consequently, the development and maintenance of these pipelines required significant customization. Data engineers had to build and adapt pipelines to cater to each unique use case, which was not only time-consuming but also demanded a high level of expertise and understanding of the specific business context.

This approach resulted in a proliferation of highly specialized pipelines, each with its own set of complexities and maintenance challenges:

Key Characteristics and Limitations

Data pipelines are typically built as a series of interconnected processes, where each step is designed to perform a specific task such as extracting data from a source, transforming it, and then loading it into a target system. This process often involves custom coding and manual configuration, tailored to specific data sources and business requirements. Each component in the pipeline is usually dependent on the preceding one, creating a tightly coupled system.

.png?width=3407&height=1581&name=Data%20Product%20Builder%20(4).png)

The rigid and complex nature of traditional data pipelines led to significant limitations:

-

Complex Web of Pipelines: The traditional approach typically involves creating individual, siloed pipelines for each specific dashboard or report. This approach leads to the creation of a massive web of fragile pipelines, each requiring extensive custom coding and individual maintenance, resulting in a lack of cohesion and governance across the data infrastructure. The need for specialized knowledge and continuous customization increases the risk of errors and inefficiencies, adding to the overall complexity of these systems. This complexity can hinder scalability, adaptability, and efficient data management.

-

Manual Coding: With traditional pipelines, data engineers are often required to write custom code for each step, manually configure components, and adapt the pipeline for unique use cases. This means data engineers need to write and maintain scripts or code for data extraction, transformation, and loading (ETL). This not only consumes a significant amount of time but also demands a deep understanding of both the technical intricacies and the specific business context.

-

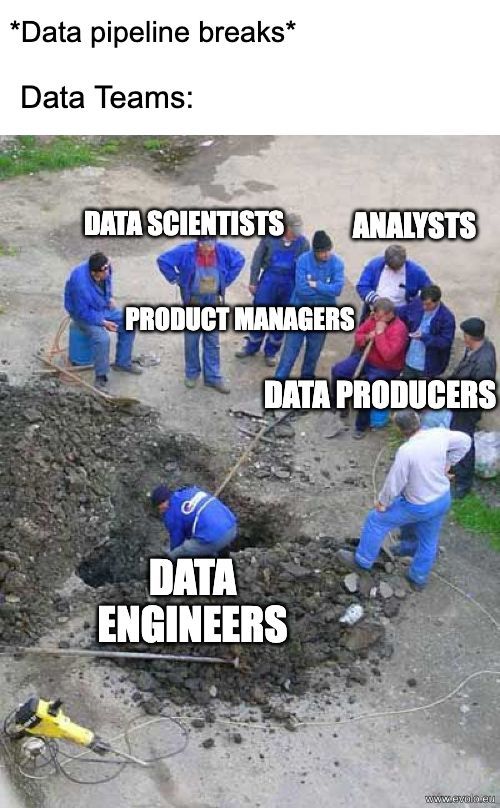

Fragility and Error Propagation: Due to their complex and customized nature, traditional data pipelines are prone to fragility. The tightly coupled nature of these pipelines means that a failure in one component can halt the entire process. Errors introduced at any point in the pipeline can propagate downstream, making it challenging to identify and rectify issues efficiently. This lack of modularity and isolation between pipeline components contributes to their overall fragility.

-

Accumulated Technical Debt: Traditional data pipelines often accumulate technical debt over time. Technical debt refers to the hidden costs and complexities that arise from shortcuts, suboptimal solutions, or the use of custom code in the development and maintenance of data pipelines. As these pipelines evolve, the burden of maintaining and updating custom code can increase, leading to higher long-term costs and a greater risk of errors and inefficiencies. This accumulated technical debt can impede agility and hinder organizations from adapting quickly to changing data needs. In contrast, modern data flows emphasize efficiency, standardization, and automation, reducing the accumulation of technical debt and promoting a more sustainable data integration approach.

-

Resource-Intensive Maintenance: The bespoke nature of traditional data pipelines necessitates ongoing, resource-intensive maintenance efforts. As business needs change, these pipelines often require substantial reworking or even complete rebuilding. Any modifications or updates to data sources, data formats, or business logic can trigger a cascade of changes throughout the pipeline. This not only incurs additional development costs but also increases the risk of introducing errors and inconsistencies into the data processing workflow.

-

High TCO (Total Cost of Ownership): The cumulative costs associated with building, maintaining, and scaling traditional data pipelines can be substantial. These costs include not only the development efforts but also ongoing operational expenses, making the total cost of ownership (TCO) higher compared to more modern and automated data integration approaches.

-

Data Silos and Hindered Collaboration: Traditional pipelines often create data silos as they are tailored to specific use cases or departments. These isolated data sets hinder cross-functional collaboration, preventing organizations from achieving a holistic view of their data. Breaking down these data silos is essential for fostering collaboration and harnessing the full potential of data assets.

-

Limited Data Modeling: Traditional data pipelines typically prioritize data movement and transformation, leaving data modeling as a separate, manual process. This approach limits the integration of data modeling into the data pipeline workflow, potentially leading to discrepancies and inefficiencies when preparing data for analytics or reporting.

-

Inconsistent Data Storage: Traditional data pipelines, being highly specialized, often result in inconsistent data storage practices. Data may be centralized to some extent, but it often lacks a unified and easily accessible repository like a data lake. Data from different sources might be stored separately, leading to challenges in data discovery and consolidation. Inconsistencies in data storage formats and conventions make it difficult to ensure data quality and maintain a unified view of the organization's data.

-

Lack of Scalability: Traditional data pipelines are typically designed with a fixed set of data sources, formats, and processing logic. This design does not easily accommodate changes in data volume, variety, or velocity. As businesses grow and evolve, data needs expand, making these pipelines less adaptable to new requirements. This lack of scalability can result in performance issues, increased risk of breakdowns, and limitations in handling the increasing volumes of data that modern organizations generate.

-

Governance and Compliance Challenges: Ensuring data governance and compliance with traditional data pipelines can be particularly challenging. The diverse data sources and customized nature of these pipelines make it hard to consistently apply governance policies and meet compliance standards. Tracking data lineage, enforcing access controls, and auditing data usage become intricate tasks, increasing the risk of data breaches and regulatory violations.

While traditional data pipelines were pivotal in early data management, their limitations posed significant challenges, paving the way for the development of more advanced data integration solutions.

Emergence of Modern Data Flows

In response to the limitations of traditional data pipelines, the emergence of modern data flows represents a significant evolution in data integration and management. They are designed for flexibility, scalability, and efficiency, aligning with the dynamic needs of modern data environments.

.png?width=4013&height=1800&name=Data%20Product%20Builder%20(1).png)

Differences in Construction and Functionality

-

Modular and Agile Development: Modern data flows are characterized by their modular architecture, where each component serves a specific purpose in the data processing pipeline, such as data ingestion, cleansing, transformation, or delivery. These modular components are designed to be highly reusable and configurable, enabling organizations to respond with agility to changing data landscapes. Unlike the rigid, custom-built nature of traditional pipelines, modern data flows allow for the rapid addition or modification of modules to accommodate new data sources, formats, or evolving business requirements. This flexibility empowers data teams to make changes swiftly, reducing development overhead and accelerating time-to-insight.

-

Holistic Data Integration with Data Fabric: Unlike traditional methods that necessitate separate workflows for each stage of the pipeline, modern data flows seamlessly consolidate data integration into a single, streamlined process. This comprehensive framework, known as “Data Fabric”, ensures that data is collected, cleansed, transformed, and modeled within a single workflow united by metadata. The outcome is the creation of reusable, governed, and business-ready data assets known as "data products." The inclusion of metadata not only eliminates data inconsistencies but also enhances discoverability, lineage tracking, and overall data management, enabling organizations to derive maximum value from their data assets.

-

Automation and Orchestration Enhanced by Metadata: Modern data flows place a strong emphasis on the integration of automation and orchestration within the data integration process. This integration automates key tasks like data ingestion, transformation, and loading, minimizing the need for manual intervention. What sets modern data flows apart is the pivotal role of metadata, which serves as the backbone for automatic code generation. Metadata-driven automation ensures that data workflows are executed precisely, eliminating the need for manual triggers and human intervention. This approach not only accelerates data integration but also enhances its accuracy, empowering organizations to navigate the dynamic data landscape with efficiency and reliability.

-

Adaptability to Changing Data Sources: Modern data flows excel in their ability to swiftly adapt to evolving data sources. Unlike traditional data pipelines that often necessitated extensive manual adjustments and custom coding to accommodate new data sources, modern data flows leverage metadata to understand the structure, schema, and dependencies of data sources. This metadata-driven approach ensures that any changes to data sources or systems are automatically detected and propagated across the entire data flow. As a result, organizations can effortlessly integrate new data sources, ensuring their data ecosystem remains up-to-date and agile.

-

Data Product Creation and Modification: In modern data flows, the focus is on generating reusable data products, which are standardized datasets or data models intended for versatile use across various applications within an organization. This approach enhances operational efficiency, as data products are created once and can be utilized multiple times for different purposes, reducing the need for redundant data processing. Data products can also be easily modified to meet evolving business needs without the need to recreate them from scratch, as they are designed with adaptability in mind.

-

Semantic Layer for User-Friendly Data Access: In modern data flows, a vital distinction is the integration of a semantic layer. This layer serves as a user-friendly abstraction that simplifies the creation, modification, discovery, and usability of data products. It empowers users to interact with data using business-oriented terminology, rendering complex data structures easily understandable. This approach not only improves the accessibility and utility of data but also streamlines data management and governance processes.

-

Improved Collaboration: Modern data flows break down data silos by promoting a holistic view of data within the organization. The availability of data products in the semantic layer fosters a collaborative environment within organizations. With data presented in a user-friendly, business-oriented manner, teams from various departments can effortlessly collaborate and align their efforts. This common understanding of data promotes cross-functional cooperation, allowing diverse teams to work together more effectively on analytics, reporting, and decision-making processes. As a result, improved collaboration leads to better insights, streamlined workflows, and ultimately, more informed and efficient decision-making across the organization.

-

Low-Code Data Preparation: Modern data flows leverage low-code or no-code tools for data cleansing, transformation, and modeling. These tools simplify the data preparation process, enabling users with varying levels of technical expertise to perform these tasks. This stands in stark contrast to traditional data pipelines, which heavily depended on intricate custom coding and manual configuration, demanding specialized expertise and long development cycles.

-

Reduced Technical Debt: Modern data flows, driven by the strategic use of metadata, prioritize efficiency, standardization, and automation. Unlike traditional pipelines that heavily rely on custom code and manual configurations, modern data flows emphasize reusable components and streamlined workflows. This approach significantly reduces long-term maintenance costs and mitigates the risk of errors and inefficiencies associated with bespoke coding. By adopting this holistic and metadata-driven approach, organizations can maintain a sustainable and agile data integration framework, fostering continuous innovation while keeping technical debt to a minimum.

-

Efficient Scalability with Advanced Technology: Modern data flows are designed with scalability as a core feature, enabling them to efficiently manage growing volumes and diverse data types. These data flows harness advanced technologies like cloud-based services and AI-powered data automation to optimize data processing and management. This means that as organizations experience data growth, the infrastructure automatically scales to accommodate the increased demand, all while maintaining high performance. As a result, organizations can seamlessly adapt to expanding data needs without incurring significant infrastructure costs or compromising on data processing efficiency.

-

Enhanced Data Governance and Compliance: While traditional pipelines often lack centralized oversight, modern data flows employ a holistic approach to bolster data governance and compliance efforts. Centralized data integration ensures consistent application of governance policies, encompassing data quality standards and privacy regulations. Simultaneously, decentralized data product management enables teams to take ownership of specific data products, fostering accountability and collaboration. This holistic approach not only minimizes the risk of data breaches and regulatory violations but also instills confidence in data handling practices and regulatory compliance.

In summary, modern data flows, with their focus on robustness, reusability of data products, and enhanced governance, mark a significant step forward from traditional data pipelines, offering organizations a more efficient, scalable, and controlled data management solution.

Best Practices for Transitioning from Traditional Data Pipelines to Modern Data Flows

Transitioning from traditional data pipelines to modern data flows is a strategic process that requires careful planning and execution. To ensure a smooth and effective transition, organizations should follow a series of best practices:

- Assessment and Planning: Begin by conducting a thorough assessment of your current data infrastructure. Identify the limitations of your existing data pipelines and understand how they are impacting your business operations. This step is crucial for establishing a clear vision of what you want to achieve with modern data flows. Develop a detailed plan that outlines the objectives, timelines, and resources required for the transition.

- Training and Skill Development: Since modern data flows often require different skills compared to traditional pipelines, invest in training and upskilling your team. Focus on areas such as metadata management, automation tools, and understanding the new architecture of modern data flows. This ensures that your team is well-prepared to handle the new system effectively.

- Data Governance and Compliance: Establish a strong data governance framework to ensure data quality, security, and compliance with regulatory requirements. This should include policies for data access, usage, and privacy. A well-defined governance framework is essential for maintaining the integrity and reliability of data throughout the transition process.

- Choosing the Right Tools: Selecting the appropriate tools and technologies is critical for the success of modern data flows. Consider tools like TimeXtender that offer a holistic data integration solution, enabling seamless transition and efficient data management. Look for solutions that provide low-code interfaces, automation capabilities, and support for a wide range of data sources and formats.

- Data Migration Strategy: Develop a comprehensive data migration strategy. This involves mapping out how data will be moved from the old system to the new one, ensuring data integrity and minimizing downtime. Pay special attention to data formats, transformations, and the integration of different data sources.

- Testing and Validation: Rigorous testing is essential to ensure that the new data flows are functioning as intended. Conduct extensive testing for data accuracy, performance, and integration with other systems. Validation helps in identifying and addressing any issues before the full-scale implementation.

- Monitoring and Continuous Improvement: Once the modern data flows are in place, establish continuous monitoring to track performance, data quality, and system health. Use insights from monitoring to make iterative improvements and optimize the data flows over time.

- Change Management: Effective change management is crucial for the transition. Communicate the changes and their benefits to all stakeholders to secure their buy-in and cooperation. Provide adequate support and resources to help your team adapt to the new system.

- Documenting Processes and Procedures: Ensure that all processes and procedures related to the new data flows are well-documented. Documentation serves as a valuable resource for training, troubleshooting, and future reference.

By following these best practices, organizations can successfully navigate the transition from traditional data pipelines to modern data flows, leveraging tools like TimeXtender for efficient, scalable, and holistic data integration. This transition not only enhances data handling capabilities but also positions organizations to better meet the evolving demands of the data-driven business landscape.

Holistic Data Integration Tool with TimeXtender

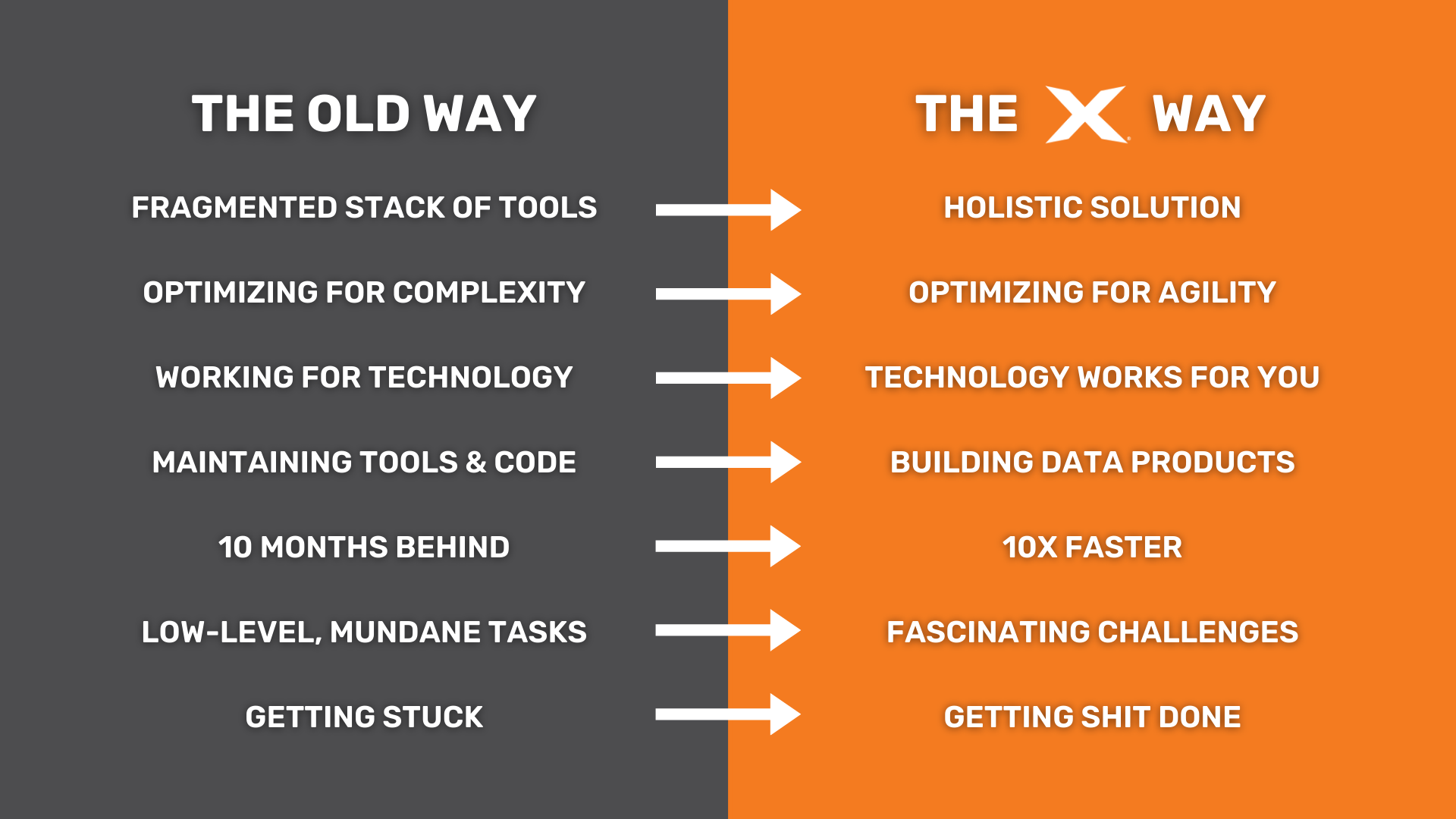

As we’ve discovered, traditional data pipelines often fall short in addressing the dynamic requirements of modern businesses. At TimeXtender, we understand the challenges posed by traditional data pipelines and the need for a more efficient, scalable, and integrated approach to data management.

We offer a holistic solution that overcomes these limitations and propels organizations into a future of efficient and streamlined data integration:

Embracing Modular and Agile Development

TimeXtender's architecture embodies the essence of modular and agile development. Each component of our solution, from data ingestion, to preparation, to delivery, is designed to be highly configurable. This modular structure enables rapid adaptation to changing data landscapes, mirroring the agility and flexibility that modern businesses require. By moving away from the rigid, custom-built nature of traditional pipelines, we facilitate swift changes, reducing development time and accelerating insights.

Data Fabric: The Core of Holistic Integration

Our approach to data integration is rooted in the concept of a Data Fabric. This unified framework ensures that data collection, cleansing, transformation, and modeling occur within a single, metadata-driven workflow. This seamless process results in the creation of "data products" — reusable, governed, and business-ready data assets that streamline data management and enhance value extraction from data.

Automation and Orchestration Powered by Metadata

At TimeXtender, automation and orchestration are central to our data integration process. By leveraging metadata, we automate crucial tasks like data ingestion, transformation, and deployment. This metadata-driven approach eliminates the need for manual intervention, ensuring precise and efficient execution of data workflows. Our focus on automation not only speeds up data integration but also bolsters accuracy and reliability, enabling businesses to navigate the dynamic data landscape with confidence.

Adapting to Evolving Data Sources

Our solution excels in adapting to changing data sources. Unlike traditional pipelines that require extensive manual adjustments, TimeXtender uses metadata to seamlessly integrate changes in data sources or systems. This ensures that your data environment remains agile and up-to-date, effortlessly accommodating new data sources.

Data Product Creation and Modification

TimeXtender focuses on creating adaptable data products. These data products, designed for multiple uses across an organization, enhance operational efficiency by eliminating redundant data processing. They are easily modifiable, ensuring they meet evolving business needs without the need for recreation.

Semantic Layer for Accessibility

We incorporate a semantic layer in our solution, simplifying data access and management. This layer allows users to interact with data in business-oriented terms, making complex structures easily understandable. It not only improves data accessibility but also streamlines governance processes.

Fostering Collaboration Through Data

By breaking down data silos, TimeXtender promotes a holistic view of data within organizations. Our semantic layer enables seamless collaboration across departments, enhancing data-driven decision-making and operational efficiency.

Low-Code Data Preparation for All Users

Our solution provides a low-code user interface for data preparation, standing in stark contrast to traditional pipelines that depend on custom coding. This approach enables users of varying technical levels to efficiently engage in data transformation and modeling.

Reducing Technical Debt

TimeXtender prioritizes reducing technical debt through efficiency, standardization, and automation. By moving away from custom code and manual configurations, we offer a sustainable and agile data integration framework that fosters innovation while minimizing long-term maintenance costs.

Scalability with Advanced Technology

Our solution is designed for efficient scalability, managing increasing data volumes and diversity by leveraging AI-powered automation and integrating with advanced technologies like cloud storage platforms. This scalability ensures that as data needs grow, our infrastructure adapts without incurring significant costs or compromising performance.

Enhancing Data Governance and Compliance

TimeXtender enhances data governance and compliance with a centralized approach to data integration. Our solution ensures consistent application of governance policies, data quality standards, and privacy regulations, minimizing risks and instilling confidence in data practices.

In summary, TimeXtender represents a paradigm shift in data management, aligning with the principles of modern data flows. Our holistic, metadata-driven solution addresses the shortcomings of traditional data pipelines, offering an advanced, flexible, and efficient approach to data integration that is essential for the dynamic demands of contemporary businesses.